What an AI sales tool taught me about product adoption

MY ROLE ON THE PROJECT

I led product design from early discovery to launch.

I worked closely with Product, Sales, CSM, and Data teams to:

• Clarify the problem we were solving

• Map how sales reps actually worked

• Define what “good” recommendations looked like

• Design the end-to-end experience

I owned the UX strategy, interaction design, and prototyping.

The Opportunity

To hit our 25/26 financial goals, the business wanted to:

The reality

From my early conversations with sales and CSM teams, I identified three main issues:

BEFORE AI CONTENT RECOMMENDER

How might we help reps recommend relevant content without relying so heavily on subject matter experts?

I worked with the PM to shape a core hypothesis:

"An AI assistant could help reps structure client needs and generate recommendations faster, reducing reliance on SMEs."

I designed an AI-powered recommender that allowed reps to:

AFTER AI CONTENT RECOMMENDER

To support different working styles, I explored three input methods:

We launched with the guided flow first, to improve input quality and reduce risk.

Because the quality of the recommendations depended on the quality of the inputs, I advocated for starting with a guided Q&A flow to structure inputs and reduce variability. This let us test the concept with more reliable data before expanding to more flexible input methods.

Early results (first 6 weeks)

What Happened Over Time

As usage data came in, I noticed a gap between expected and actual behavior.

• Weekly usage started to decline, and many sessions contained incomplete or vague inputs.

• Reps were not consistently using the tool to build structured recommendations, instead defaulting to quicker, lower-effort interactions.

I worked with the team to review usage patterns and understand where the breakdown was happening. While initial assumptions pointed to AI quality, the data suggested a more complex issue tied to user behavior and trust.

A trust gap emerged

While reviewing usage logs and feedback, I noticed an unusual pattern from one of the Curriculum specialists.

And there was broader, growing tension

Our goal was to unify recommendations, but the tool was perceived as reducing control for sales and SMEs.

Investigating the root cause

To better understand this, I set up a 1:1 with the SME who was showing this unusual behavior.

This stakeholder had not been actively engaging in discussions with product and engineering but, surprisingly, was open to speaking with me directly.

Through this conversation, I uncovered a key insight:

The real concern wasn’t that the AI was unreliable; it was that sales reps might rely on it too heavily without exercising enough judgment.

This exposed a deeper problem:

SMEs were not only evaluating the tool's output; they were also evaluating how reps would use it.

Design Response: supporting trust and control

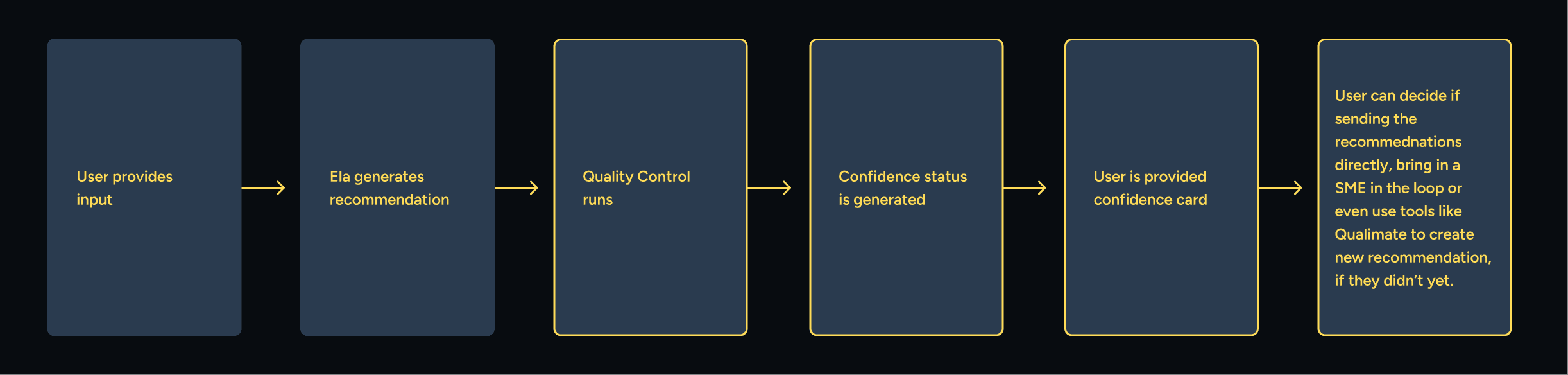

Based on this, I explored ways to better support decision-making and reduce risk. The proposal was for Ela to check input completeness and flag whether a recommendation is safe to share, somewhat safe, or requires expert review. This would balance speed with accountability, helping reps move faster while still supporting their judgment.
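As a rough illustration of the flagging idea, the sketch below scores how complete a rep's inputs are and maps that score to one of the three labels. The field names, thresholds, and function name are all hypothetical; the concept was never built, so this is only one way the logic might have looked.

```python
from dataclasses import dataclass

# Hypothetical fields a rep might fill in during the guided flow.
REQUIRED_FIELDS = ("client_goal", "audience", "skill_level", "timeframe")


@dataclass
class ReviewFlag:
    completeness: float  # share of required fields that were filled in
    label: str           # "safe to share", "somewhat safe", or "requires expert review"
    missing: list[str]   # names of the required fields left empty


def flag_recommendation(inputs: dict[str, str],
                        safe_at: float = 1.0,
                        caution_at: float = 0.5) -> ReviewFlag:
    """Label a recommendation by how complete the rep's inputs were.

    The thresholds are illustrative assumptions, not values from the project.
    """
    missing = [f for f in REQUIRED_FIELDS if not inputs.get(f, "").strip()]
    completeness = 1 - len(missing) / len(REQUIRED_FIELDS)
    if completeness >= safe_at:
        label = "safe to share"
    elif completeness >= caution_at:
        label = "somewhat safe"
    else:
        label = "requires expert review"
    return ReviewFlag(completeness, label, missing)
```

Returning the list of missing fields alongside the label would let the interface tell a rep what to add before sharing, rather than only telling them to stop.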

The goal was not just to improve transparency, but to:

To move this beyond exploration, I also organised a working session with the SME, Product Manager, and Engineering Manager to align on the underlying trust issue and discuss potential approaches.

This shifted the conversation from focusing purely on AI accuracy to considering how to support responsible usage and build confidence in how recommendations are applied.

Outcome

While the proposed solutions resonated, they were not implemented. At the same time, broader adoption continued to decline due to inconsistent support from sales leadership, existing reliance on alternative tools, and misalignment between the product and established workflows.

As a result, the product was eventually deprioritized.

What I Learned

This project reinforced that adoption of AI products depends as much on organizational context and user behavior as on the quality of the solution itself.

Even with early positive signals, the product relied on inputs, workflows, and incentives that were not consistently present. I learned that when a system depends on behavior change—such as structured data input or reduced reliance on SMEs—it requires stronger alignment across teams and leadership to succeed.

Through investigating SME feedback, I also saw that trust in AI systems is not only about output quality, but about how confident stakeholders are in the people using them. Designing for AI includes supporting appropriate levels of trust and control, not just improving recommendations.

Finally, this experience highlighted the importance of understanding existing tools and ownership dynamics. Introducing a unified solution in a fragmented ecosystem can create resistance if it challenges established ways of working without clear alignment.
