Accelerating B2B Sales at QA with an AI-powered Content Recommender

I designed an AI assistant that helps sales reps recommend learning content with confidence, reducing SME dependency and speeding up deals.


MY ROLE ON THE PROJECT

I led the product design work from early discovery to rollout. I worked closely with the Product Manager and the Sales, CSM and Data teams to map out user journeys, define metrics, and prototype an experience tailored for B2B reps. I owned the UX strategy and interaction design.

We needed a faster way to recommend content

To hit our 25/26 financial goals, we needed to accelerate deal velocity and reduce lead drop-offs. But sales teams faced three major blockers.

For most B2B deals, reps relied heavily on SMEs (Subject Matter Experts) for content recommendations, but SME availability was limited, causing delays and lost momentum.

BEFORE AI CONTENT RECOMMENDER

How might we help reps recommend relevant content without relying so heavily on subject matter experts?

The solution was to create an AI-powered content expert, designed specifically to help sales reps recommend the right content with confidence.

I designed a flow that allowed reps to:

To make sure Ela delivered value quickly, I designed for fast activation, so reps could benefit from their very first session.

AFTER AI CONTENT RECOMMENDER

Meet ElaX Sales! Built for flexibility, speed and trust.

From a UX perspective, I designed three input modes to support different rep working styles and experience levels:

Below is the guided Q&A flow, the first mode we released, as it felt the safest.

This allowed us to observe user preferences and test which input types generated more accurate AI outputs. Designing for both usability and input fidelity improved Ela’s value across varied workflows.

Some interesting early reactions

Users struggled to adjust to a probabilistic system

One unexpected insight: reps were frustrated by AI's non-deterministic nature. Getting even slightly different results from the same input felt unreliable to users accustomed to fixed systems. This exposed a core challenge in AI design: expectation management.

SMEs had some very valid concerns

To build trust early, we asked reps to validate Ela's suggestions with SMEs. Over time, a deeper trust gap emerged. The issue wasn't whether SMEs trusted Ela, but whether they trusted reps to iterate confidently and know when human expertise was needed. As a result, many reps defaulted to waiting for SME approval, which led to a drop in Ela adoption after launch.

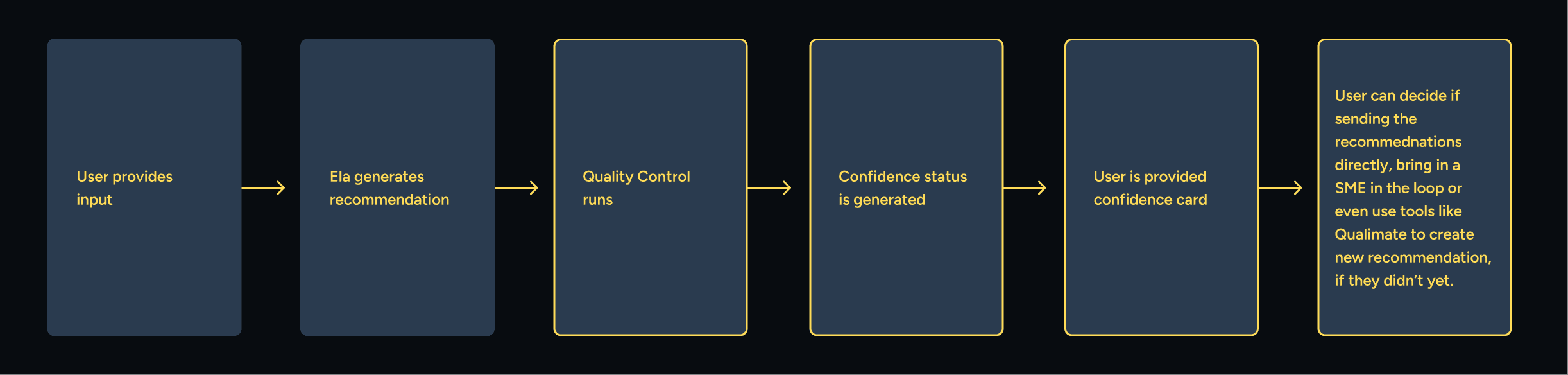

I designed trust signals to support better judgment

I introduced a trust signal layer. Ela now checks input completeness and flags whether a recommendation is safe to share, somewhat safe, or requires expert review. This balances speed with accountability: reps can move faster while still exercising their own judgment.

And motivated feedback by making its impact visible

We also experimented with a lightweight feedback loop. After each recommendation, reps could rate its usefulness or flag concerns, but participation was low. To encourage it, I designed a motivational nudge that showed how their input would improve Ela's accuracy over time. The next iteration will introduce a percentage-based improvement signal to make that impact more tangible. It is still early, but this lays the foundation for long-term motivation through visible progress.

Results and impact so far

What I learned

This was a B2B sales enablement experiment, built under tight constraints and at speed. Even so, it became a meaningful opportunity to deepen my experience designing an internal tool with measurable commercial impact.

A key learning was that AI familiarity doesn’t equal trust. Many reps still expected deterministic behavior and perceived AI’s variability as unreliable, even in a digital-first, learning-focused environment.

That insight changed how I thought about adoption. I realised that building trust in AI isn’t just about polished interfaces or familiar UX patterns, but about setting the right expectations, working with existing team culture, and helping people understand when they can rely on automation and when a human should step in.

Check out more projects

How a panel redesign helped Sales and Customer Success teams avoid critical setup errors

I redesigned a complex admin tool used daily by Sales and Customer Success teams to configure enterprise client settings. The legacy system made critical errors easy. I rebuilt it around clarity, feedback, and safer workflows, reducing support needs and user stress.

Drop in support tickets tied to misconfiguration

New CSMs reporting higher confidence earlier

How I've helped QA users learn more confidently with an AI assistant.

I led the design for the 0-to-1 launch of QA's learning assistant at the beginning of 2025. Created to reduce friction and support learning momentum, Ela is now used by 80+ client accounts and holds a 4.5/5 user satisfaction score.

Avg. rating 4.5/5: Strong validation for a beta release

How I redesigned navigation to keep learners on track.

I designed a universal sidebar to unify all content types during a major content merger. Learners can now track their progress without leaving the lesson, reducing distractions.

53% drop in users exiting lessons to track progress

28% reduction in fullscreen toggling to study

How I simplified and unified a misaligned Text Lectures experience to support a major content merger.

I transformed fragmented Text Lectures into a unified, intuitive experience, resolving usability and progression issues to protect learner engagement and retention during Circus Street's post-merger transition.

Resolved 5 out of 5 major UX inconsistencies, clearing all identified UX debt.

How I gamified learning at Cloud Academy, boosting user motivation and MoM cashflow.

I designed a 3-month challenge that increased engagement, reduced churn, and boosted cashflow. 20% of participants were still active three months later, well beyond typical subscription periods.

+18% increase in MoM upfront cashflow